As a non-Java coder, for the last ten years I’ve stumbled my way through the JVM-centric world of "big data" (as it was called then), relying on my wits with SQL and config files to just about muddle through. One of the things that drew me to Kafka Connect was that I could build integrations between Kafka and other systems without needing to write Java, and the same again for ksqlDB and Flink SQL—now stream processing was available to mere RDBMS mortals and not just the Java adonises.

One thing defeated me though; if a connector didn’t exist for Kafka Connect, then I was stuck.

I’d resort to cobbled-together pipelines leaning heavily on kafkacat kcat, such as I did in this blog post.

I built some cool analytics on top of maritime AIS data about ships' locations, but the foundations were shaky at best:

nc ais.host.com 5631 | gpsdecode | kafkacat -b broker:9092 -PNo failure logic, no schema handling, no bueno.

What I really needed was a connector for Kafka Connect. However for that, you need Java.

I don’t write Java.

But Claude can write Java.

I’ve already waxed lyrical about the power of LLMs for coding, and written about the risks of not keeping up with this revolution in how we build things, and the limitations of what they can currently do. So far all this work with coding agents was generally within the realm of something I could eyeball (HTML/CSS), or actually debug and validate (dbt/SQL).

But what about getting a coding agent to build a connector for me?

Skillin Up 🔗

There were two things I wanted to get out of this experiment - a working connector for Kafka Connect, and a skill that I could use to have Claude build more in the future. Simply prompting it to build a connector throws away a lot of useful context and knowledge that it builds up in the process.

Since I don’t know anything about building connectors, I figured I’d just get Claude to figure it out:

Claude went away and busily set fire to a bunch of tokens. It came back like a proud six year old, having completely missed the point.

Wait, what?

provides deep knowledge of the Kafka Connect REST API and common connector configuration patterns?

Once back on course, it came up with a plan. I may not code Java, but I know enough to nudge Claude to really do a thorough job learning as much as it can, as well as reminding it about security. As a side note, this is one of the fundamental beauties of source-available code: people, and now agents, can learn from it.

After that, it was full-stream ahead, and Claude duly wrote the skill, nbd ¯\_(ツ)_/¯

# Kafka Connect — Connector Development

Build production-grade Kafka Connect source and sink connector plugins in Java.

## Workflow

1. **Gather requirements** — source or sink? external system? schema? auth? offset model?

2. **Scaffold** — Maven project, directory layout, POM dependencies

3. **Implement Connector** — config validation, task partitioning

4. **Implement Task** — data movement (poll or put), offset tracking

5. **Define configuration** — ConfigDef with types, validators, groups

6. **Handle schemas** — SchemaBuilder, Struct, logical types

7. **Security review** — credentials, logging, SSL, error sanitization

8. **Test** — unit, integration (Testcontainers), failure scenarios

9. **Package & deploy** — plugin directory or uber-JAR, install to plugin.path

[…]You can see the complete skill here.

| This is a proof of concept. Literally that. Proving a concept. Don’t use it unless you know what gaps might exist with it, both in the skill and the code that it creates. |

Claude, build me a connector 🔗

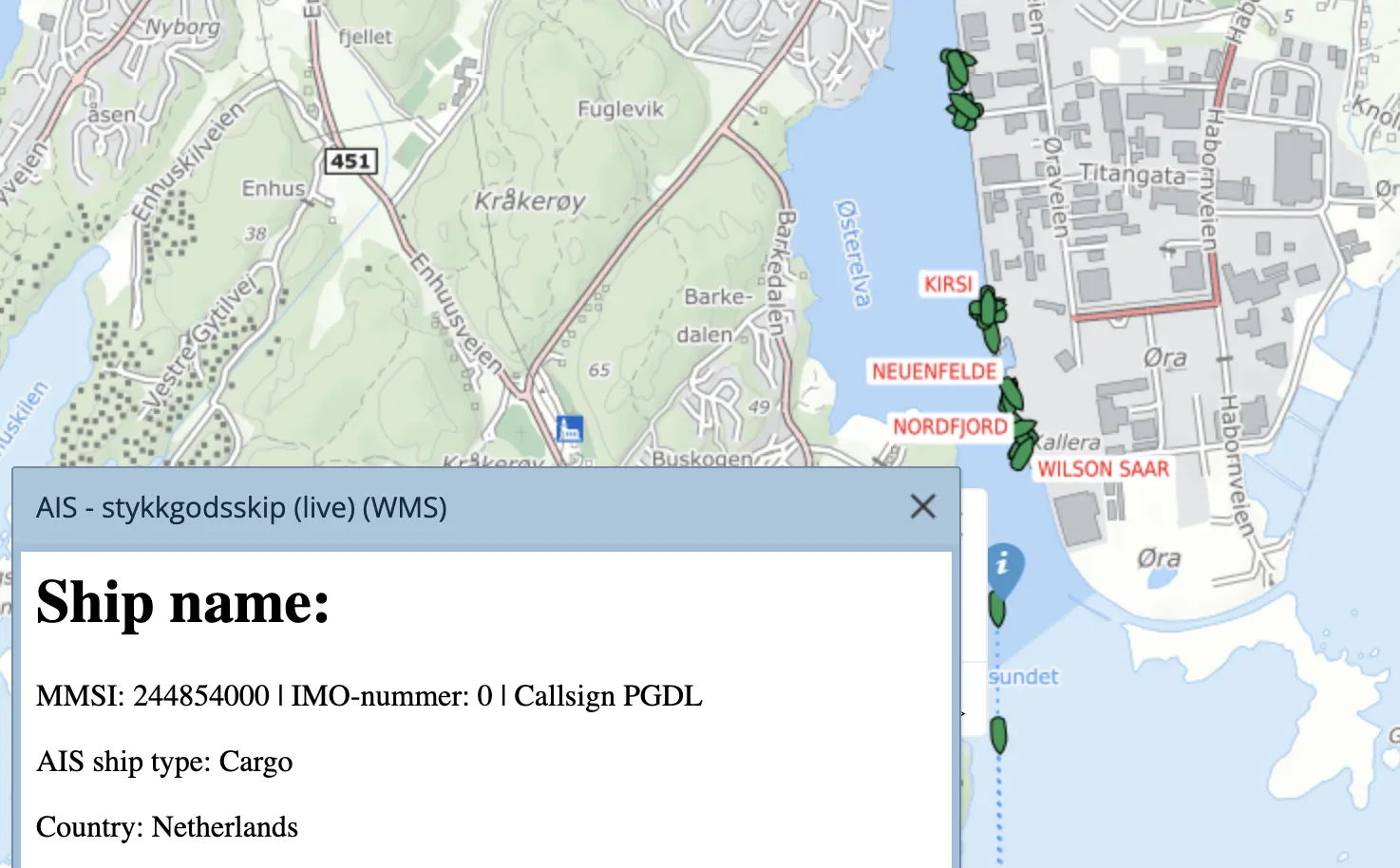

As I mentioned above, a few years ago I wrote a blog post about stream processing using AIS data as the source. This data is emitted from ships, with information about their heading etc. Unless you have an AIS receiver, you need to find a service that emits this relayed AIS data, which fortunately the Norwegian government provides for free, including a map viewer:

There’s also a TCP endpoint, which I figured Claude could use to test and validate a connector.

Claude’s thinking

The user wants to build a Kafka Connect source connector for AIS (Automatic Identification System) data. AIS is a maritime tracking system used by ships. The endpoint at 153.44.253.27:5631 appears to be a live AIS data feed.

Let me first understand what AIS data looks like and explore the codebase to see if there’s any existing work. Let me also test the endpoint to understand the data format.

I should: 1. Explore what AIS data format looks like (NMEA sentences typically) 2. Test the endpoint to see what data comes through 3. Check if there’s any existing connector or project structure

Let me start with Phase 1 - understanding.

If you’re interested in how Claude works, using Simon Willison’s claude-code-timeline tool is a great way to see quite what it gets up to. Poking around through the JSONL file that Claude writes to, you get an insight into its "thought" process:

Claude’s thinking

Now I have real AIS data from the endpoint. Let me analyze what I see:

[…]

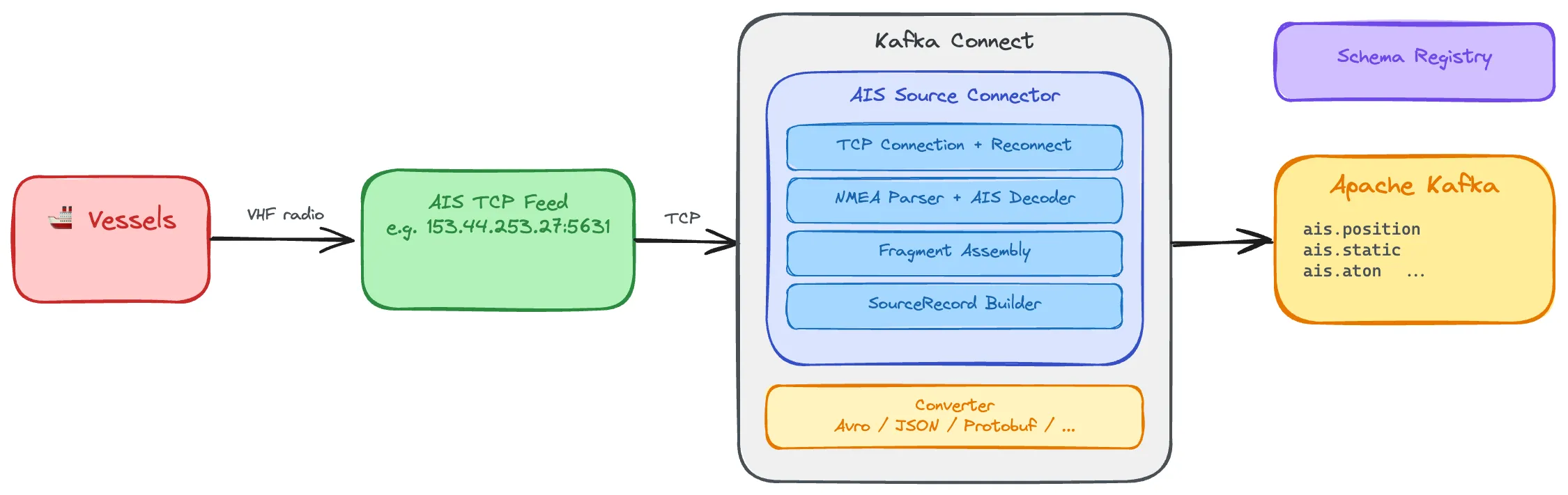

Now I’m ready to design the connector architecture. I need to build a source connector that can handle this tag block format, parse the Norwegian station identifiers, and manage multi-sentence message reassembly.

The key architectural decisions are whether to decode AIS messages internally using a Java library or pass raw NMEA downstream, how to organize the output topic structure, and how to handle the offset model for a live stream with no meaningful replay capability.

Actually, let me think about this more carefully. The user said "a source connector for AIS data" - they want a proper Kafka Connect connector. Given their existing experience with the nc+gpsdecode+kcat pipeline, they probably want something more robust and native.

How did it know about my existing nc+gpsdecode+kcat pipeline?

If you look at the prompt above, I just told it the AIS endpoint.

Turns out it had a good rummage around my existing projects!

From that it found its way to my local clone of demo-scene which includes the code it references. Clever stuff.

Its first iteration of the plan is pretty good:

Now comes the bit that Claude (or a real developer, without domain knowledge) can’t do: does it actually make sense what’s being proposed?

I picked up on its plan to write one single topic. AIS data has different record types, with different fields across them. I also wanted it to "make no mistakes" a.k.a. sense-check its architecture that it wasn’t going to bug-out on a flaky TCP connection.

Other than the ass-kissing ("Great questions!") its reply is useful:

At this point, I am full of sheer joy. As an end user of Kafka Connect and its connectors for many years I’ve often thought wouldn’t it be nice if the connector had config option <x>. And now, they can!

Claude enables you to write tools that work as you want them, not make do and adapt your workflow to fit them. For example: one topic would be fine if that’s all I could get. But I’d probably need some post-processing (e.g. Flink SQL) to split out records of interest. Or a Single Message Transform to filter and route particular message types to a sink.

Is this TCP reconnection logic good enough? No idea. But I have the—potentially dangerous—feeling of reassurance that at least Claude "understands" the requirement and is going to code for it.

After some more back-and-forth with Claude over how it handled particular AIS message types, and making sure that the connector was going to be ergonomic to work with overall, we settled on a plan.

Claude’s thinking

The user wants me to implement a Kafka Connect AIS Source Connector based on a detailed plan. Let me start by setting up the project structure and implementing each component step by step.

This is a big implementation task.

Claude then merrily chugs away and…builds the connector. In case you’re not familiar with coding agents, the key thing here is that it doesn’t just churn out code and stop. It’ll write code, and then build it and test it, and fix problems, and test it again, until it works.

And then there it was, done:

And there we have it:

kafka-connect-ais

├── docker-compose.yml

├── README.adoc

├── src

│ ├── main

│ └── test

└── target

└── kafka-connect-ais-0.1.0-SNAPSHOT.jar (1)| 1 | Look ma, I just made my own JAR! |

The Connector 🔗

GitHub repo: https://github.com/rmoff/kafka-connect-ais

(Credit: Claude, obvs)

Let’s start up Kafka, Kafka Connect, and Schema Registry:

❯ docker compose up -d --build

[+] Running 4/4

✔ Network kafka-connect-ais_default Created 0.0s

✔ Container broker Started 0.4s

✔ Container schema-registry Started 0.4s

✔ Container kafka-connect Started 0.4sConfigure kcctl for easy working with Kafka Connect:

kcctl config set-context --cluster=http://localhost:8083 localCheck that the connector plugin has loaded (my connector! that I 'wrote'! omg!!):

❯ kcctl get plugins | grep -i ais

source net.rmoff.connect.ais.AisSourceConnector 0.1.0The bare config is very simple:

{

"name": "ais-source-per-type",

"config": {

"connector.class": "net.rmoff.connect.ais.AisSourceConnector",

"ais.hosts": "153.44.253.27:5631",

"topic": "ais",

"topic.per.type": "true",

"tasks.max": "1"

}

}Create an instance of the connector using this config:

kcctl apply -f connector-ais-per-type.jsonCheck the connector is working:

❯ kcctl get connectors

NAME TYPE STATE TASKS

ais-source-per-type source RUNNING 0: RUNNING (1)| 1 | Honestly thought it would be FAILED.

Maybe this AI thing has legs. |

Now the litmus test…do we have data?

❯ kcat -b localhost:9092 -L | grep ais

topic "ais.position" with 1 partitions:

topic "ais.binary" with 1 partitions:

topic "ais.aton" with 1 partitions:

topic "ais.static" with 1 partitions:

topic "ais.safety" with 1 partitions:Well, we have topics…

❯ kcat -b localhost:9092 -t ais.static -C -s value=avro -r http://localhost:8081 | jq '.'

{

"mmsi": 258503000,

"msg_type": 5,

"receive_timestamp": 1774612034000,

"source_station": {

"string": "2573555"

},

"raw_nmea": "\\s:2573555,c:1774612034*0D\\!BSVDM,2,1,5,A,53nQiF000000hiLH000I9DPthlDr22222222220N2H633400000000000000,0*0E\n\\s:2573555,c:1774612034*0D\\!BSVDM,2,2,5,A,00000000000,2*38",

"callsign": {

"string": "LLWF"

},

"ship_name": {

"string": "FRUHOLMEN"

},

[…]and OMG we have data! 🙀

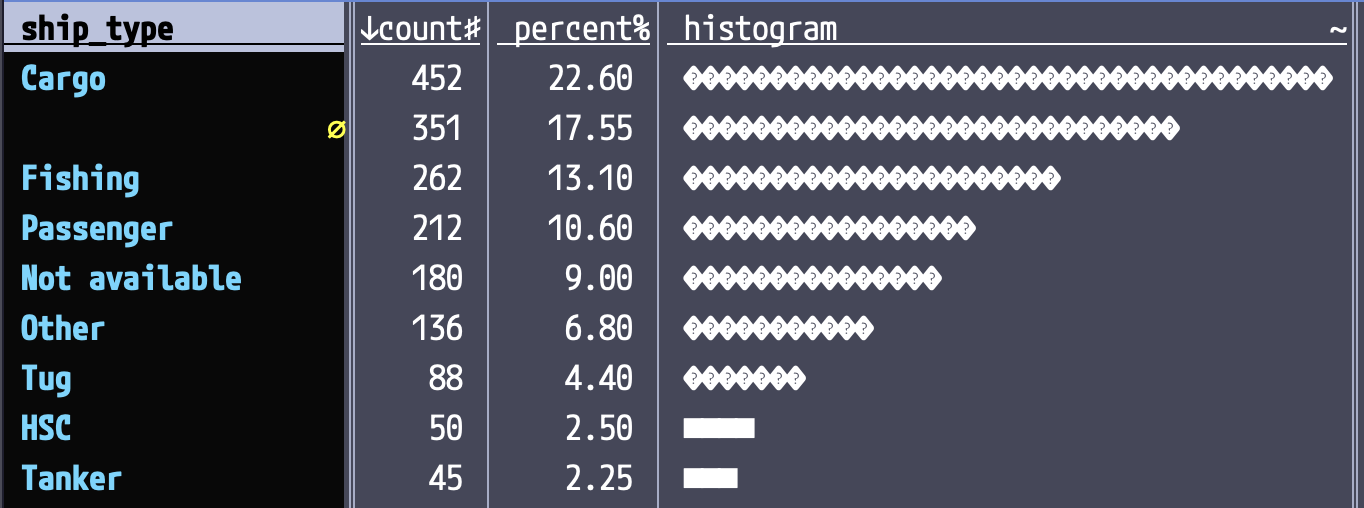

You can start to poke around the data itself and also do fun things from the terminal with visidata:

kcat -b localhost:9092 -t ais.static -C -s value=avro -r http://localhost:8081 -c 2000 | \

jq -c '{mmsi, ship_name: .ship_name.string, ship_type: .ship_type_text.string, dest: .destination.string}' | \

vd -f jsonl

Regarding this connector itself, I’ll reiterate the same as I did with the skill:

| This is a proof of concept. Literally that. Proving a concept. Don’t use it unless you know what gaps might exist with it, both in the skill and the code that it creates. |

y u hate java tho? 🔗

This is not a Java-bashing post, in the slightest.

It’s a "I don’t know this language but AI enables me to work with it".

It could just as well be a "I’m a Java programmer who doesn’t know SQL and look at what Claude wrote and wtf is this PIVOT doing"

I don’t hate Java. Language 'wars' are for imbeciles.

| You can find the connector and the skill that I built in this process here: https://github.com/rmoff/kafka-connect-ais. |