Interesting links - May 2026

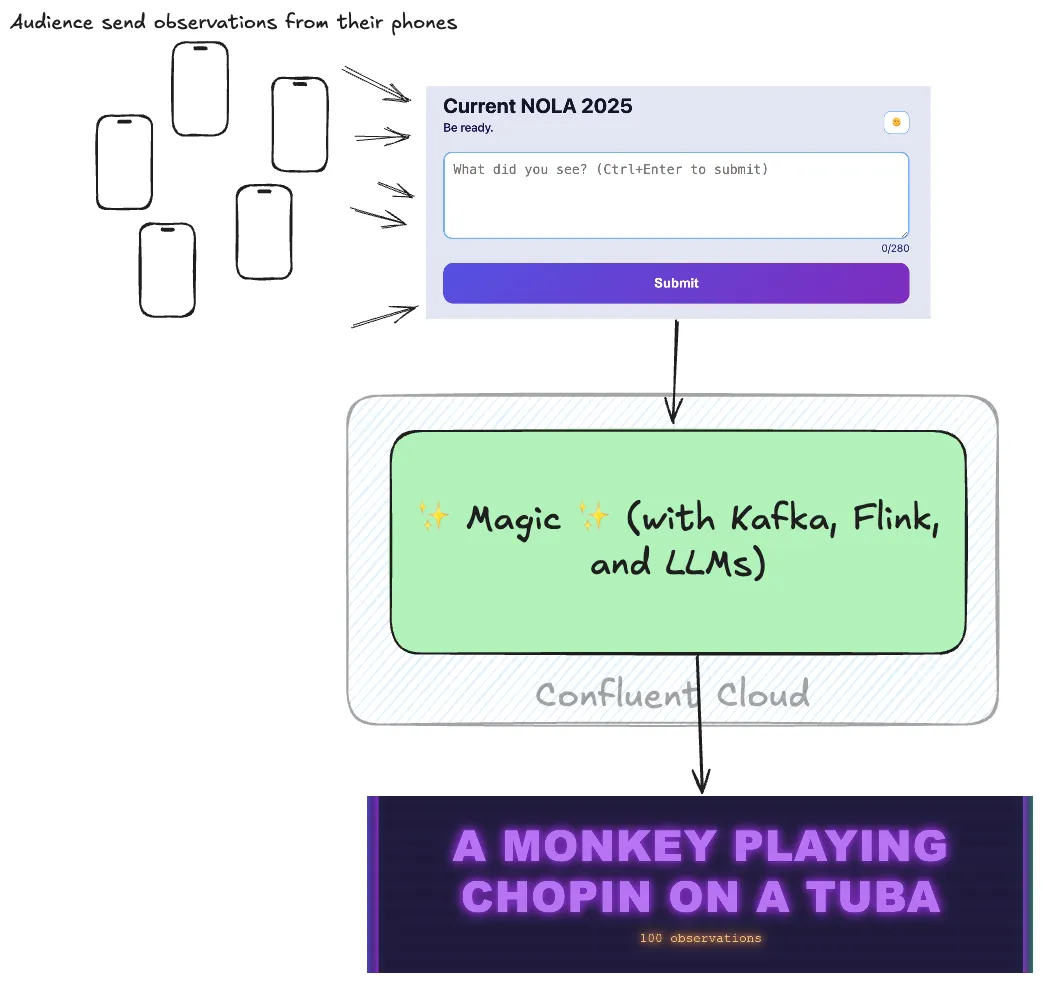

Welcome to May’s Interesting Links! This month saw the Current conference in London with the usual 5k run, lots of familiar faces and friendly conversations—and plenty of excellent breakout sessions …

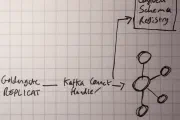

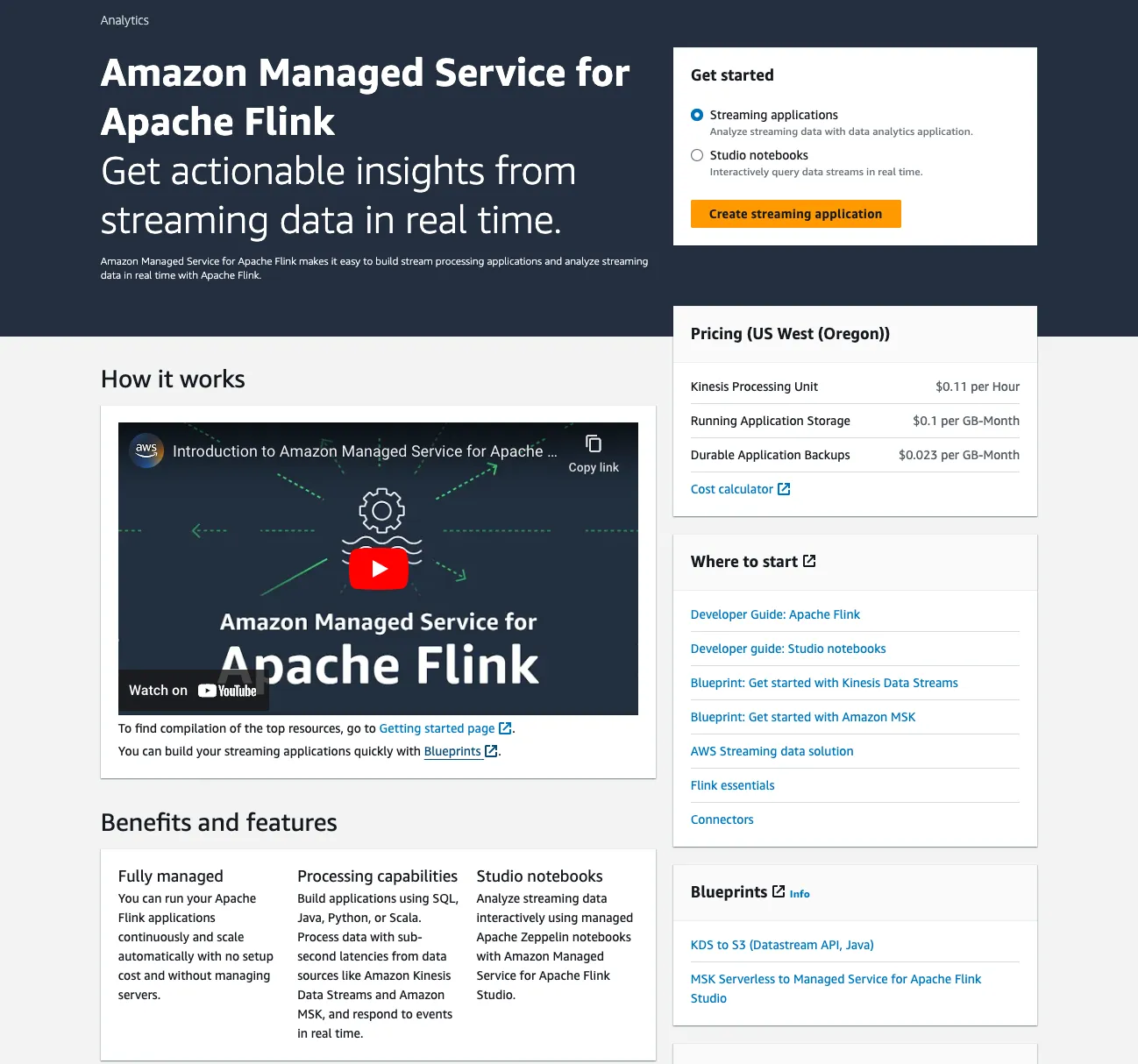

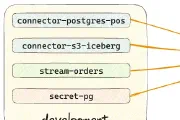

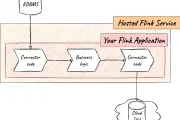

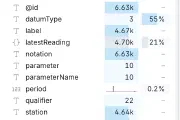

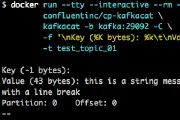

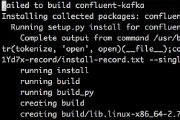

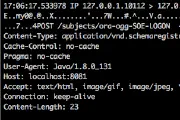

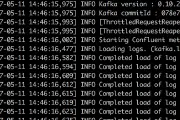

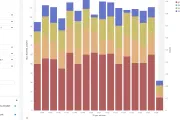

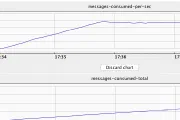
